Shadow AI Discovery: Risk Scoring, Staging & One-Click Reconciliation

AI doesn't enter organizations through one front door. It comes in through dozens of them.

A model deployed on AWS SageMaker. An inference endpoint spun up in Azure. A GitHub repository quietly importing an LLM SDK. A third-party tool integrated by a product team on a Tuesday.

Each of these is an AI system. It's possible that none of them went through a governance pathway. And without active detection, your governance team will never know they exist.

That fragmentation is the actual problem. Not a lack of effort. A lack of visibility. Governance teams can't govern what they can't find, and they can't find what's scattered across cloud platforms, code repositories, ML platforms, SaaS tools, and internal systems with no centralized record.

Once visibility is established, three new friction points show up immediately:

- No risk ranking. An internal developer script and a client-facing recommendation engine processing sensitive customer data appear in the same list. Nothing distinguishes them by consequence.

- No dedicated queue. Discovered ungoverned assets land alongside governed ones in the main inventory and are hard to distinguish. Governance teams filter and sort instead of making decisions.

- No fast path to governance. Acting on a shadow AI asset means creating a new inventory item, re-entering metadata, and standing up workflows manually, every time.

Holistic AI has just closed all three gaps with the latest release of our AI governance platform.

What the Holistic AI Governance Platform already does

Shadow AI Discovery in the Holistic AI Governance Platform was built to close the visibility gap. It connects to 50+ sources across your entire infrastructure: cloud platforms, code repositories, ML platforms, LLM providers, agent frameworks, documentation systems, and enterprise SaaS. It also scans your environment continuously.

When it finds AI, it doesn't just flag individual files. It collects the artifacts, classifies them, maps the relationships between them, and groups everything into a single unified asset record. A model file in S3, a configuration in a repo, an API endpoint in Azure, and a test script in GitLab don't surface as four separate findings. They surface as one AI system, with every artifact attached, ownership identified, and metadata extracted.

Connect. Scan. Cluster. Surface. That foundation hasn't changed. Now, you also get the governance layer on top of it.

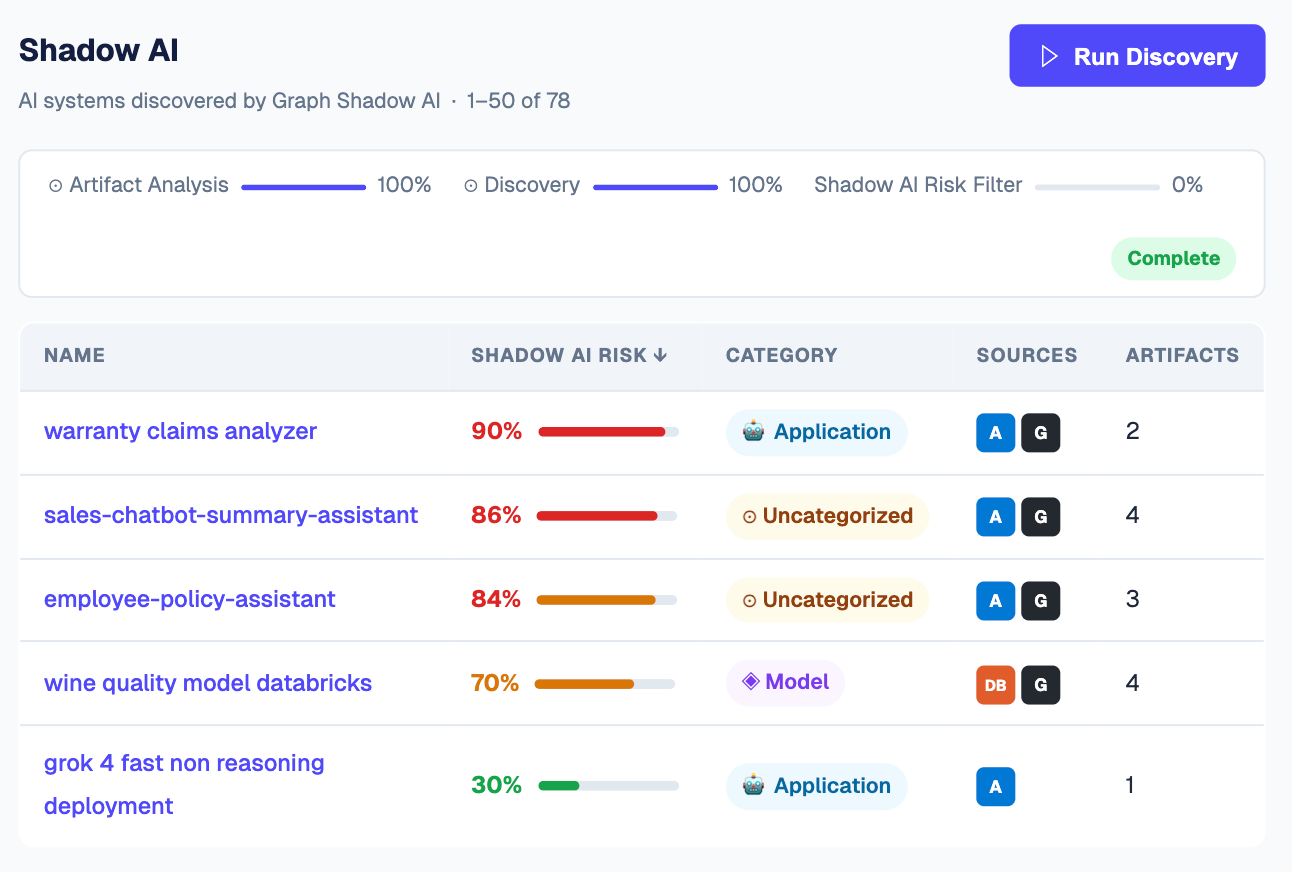

New: Dedicated Shadow AI Tab

Previously, discovered ungoverned assets appeared alongside governed ones in the main inventory. Governance teams had to filter and sort to separate what needed attention from what was already under control. The Shadow AI tab creates a dedicated staging environment, a true working queue, separate from everything already governed.

Every asset in the tab shows three things:

- Source. Where it was discovered: Azure, GitHub, Databricks, or any connected platform.

- Artifact count. How many files and records make up that asset.

- Shadow AI Risk Score. Its consequence ranking, sortable and filterable.

Governance teams open the tab, see exactly what needs a decision, and move on. Nothing is buried in a mixed list.
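To make the queue concrete, here is a minimal Python sketch of filtering and sorting discovered assets by the three fields the tab shows. It is an illustration only, not the platform's API: the `ShadowAsset` class, the `triage_queue` function, the asset names, and the 0 to 100 score scale are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShadowAsset:
    """Hypothetical record mirroring the three fields shown in the tab."""
    name: str
    source: str          # where the asset was discovered
    artifact_count: int  # number of files and records in the asset
    risk_score: float    # assumed 0-100 scale; higher means more consequential


def triage_queue(assets, min_score=0.0, source=None):
    """Filter by minimum score and optional source, then sort highest risk first."""
    selected = [
        a for a in assets
        if a.risk_score >= min_score and (source is None or a.source == source)
    ]
    return sorted(selected, key=lambda a: a.risk_score, reverse=True)


# Made-up example data
queue = [
    ShadowAsset("dev-helper-script", "GitHub", 3, 12.0),
    ShadowAsset("recommendation-engine", "Azure", 14, 91.0),
    ShadowAsset("churn-model", "Databricks", 7, 58.0),
]

high_priority = triage_queue(queue, min_score=50.0)
print([a.name for a in high_priority])
# prints ['recommendation-engine', 'churn-model']
```

In this sketch the low-scoring developer script drops out of the filtered view, and the client-facing engine rises to the top.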

New: Shadow AI Risk Score

Not all shadow AI carries the same risk. A developer's internal productivity script is a different governance priority than a client-facing recommendation engine processing sensitive customer data. Treating every discovered asset with the same urgency burns governance capacity on low-stakes work while high-stakes assets sit unreviewed.

The Shadow AI Risk Score is a composite KPI that ranks every discovered asset by consequence. It draws on four input dimensions.

A low score means the asset can be reviewed at lower priority. A high score puts it at the top of the queue. Scores are filterable and sortable directly within the Shadow AI tab.

When leadership asks how ungoverned AI is being handled, the answer is no longer a spreadsheet. It's a scored, staged queue with audit history showing what was found, when it was triaged, and how it entered governance.

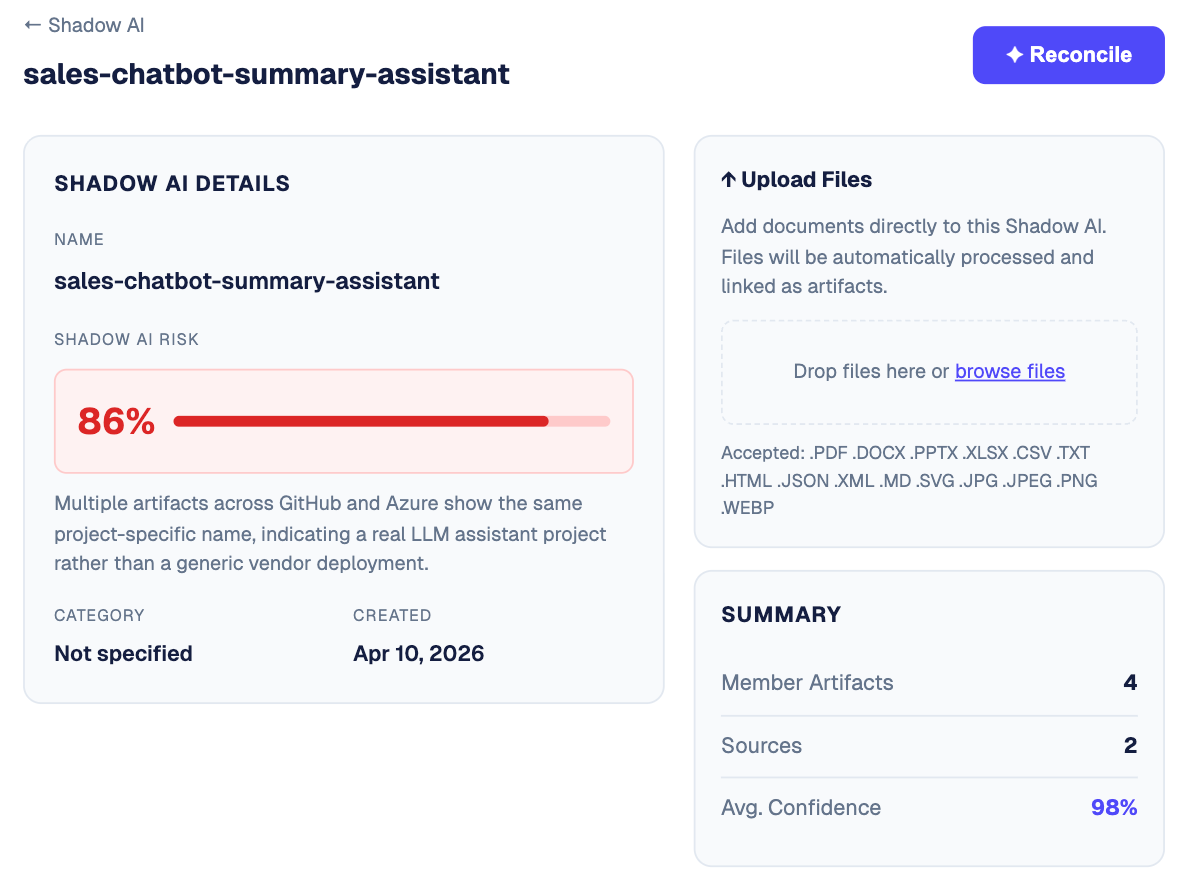

New: Upload & One-Click Reconciliation

Once a governance team reviews a shadow AI asset, acting on it used to mean starting over: create a new inventory item, re-enter the metadata, and stand up a workflow from scratch.

Now, users work directly inside the shadow AI asset record in two steps:

- Upload documentation. Anything gathered offline, received from the asset owner, or exported from another system goes directly into the record before reconciliation.

- Click Reconcile. One click converts the shadow AI asset into a fully governed inventory item. All artifacts, source metadata, and uploaded documentation carry through automatically.

From there, the asset enters standard governance workflows: risk mapping, assurance testing, programmable controls, and ongoing monitoring. No re-entry. No duplication. Reconciled assets are also immediately subject to any active Programmable Controls. If a control triggers on asset creation, newly reconciled shadow AI assets are picked up without any manual task creation required.
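The reconciliation flow described above can be sketched in Python as follows. This is a simplified model under stated assumptions, not the platform's implementation: `ShadowAsset`, `InventoryItem`, `reconcile`, and the `require_risk_mapping` control are hypothetical names, and a "control" is modeled as a plain function that runs when an inventory item is created.

```python
from dataclasses import dataclass, field


@dataclass
class ShadowAsset:
    """Hypothetical discovered asset awaiting a governance decision."""
    name: str
    source: str
    artifacts: list
    documents: list = field(default_factory=list)


@dataclass
class InventoryItem:
    """Hypothetical governed inventory item."""
    name: str
    source: str
    artifacts: list
    documents: list
    tasks: list = field(default_factory=list)


def require_risk_mapping(item):
    """Hypothetical control that fires on asset creation and queues a task."""
    item.tasks.append("risk mapping")


def reconcile(asset, on_create_controls):
    """Convert a shadow asset into an inventory item, carrying everything over."""
    item = InventoryItem(
        name=asset.name,
        source=asset.source,
        artifacts=list(asset.artifacts),   # artifacts carry through
        documents=list(asset.documents),   # uploaded documentation carries through
    )
    for control in on_create_controls:     # creation-triggered controls run here
        control(item)
    return item


# Made-up example data
asset = ShadowAsset("recommendation-engine", "Azure", ["model.pkl", "endpoint.json"])
asset.documents.append("owner-questionnaire.pdf")  # step 1: upload documentation
item = reconcile(asset, [require_risk_mapping])     # step 2: reconcile
print(item.tasks)
# prints ['risk mapping']
```

The point of the sketch is that nothing is re-entered by hand: the inventory item is built from the shadow asset record, and creation-triggered controls pick it up in the same step.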

New: Discovery Settings & Constraints

Shadow AI Discovery is only as good as what it's allowed to see. Discovery Settings give you precise control over what gets included in discovery before the algorithm runs. Think of it as input quality control for your Shadow AI output.

Two constraint types shape what the discovery engine builds from:

- Segregator. Creates processing boundaries. Artifacts on opposite sides of a boundary are treated independently, so teams, business units, or environments don't bleed into each other's clusters.

- Identifier. Drives asset linkage. Artifacts sharing the same identifier value are linked as the same asset, enabling the platform to group scattered components from GitHub, Azure, Databricks, and elsewhere into one coherent record.

The relationship between settings and output is direct. More activity signals and tighter identifier fields produce deeper, more accurate clusters. Broad, unfiltered discovery produces noisier results that take longer to triage.
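One way to picture how the two constraint types interact is a grouping key made of the segregator value and the identifier value. The Python sketch below is a deliberately simplified illustration, not the platform's discovery algorithm: the `cluster_artifacts` function, the `business_unit` and `project` field names, and the artifact paths are all assumptions.

```python
from collections import defaultdict


def cluster_artifacts(artifacts, segregator, identifier):
    """Group artifacts that share an identifier value within one segregator boundary."""
    clusters = defaultdict(list)
    for art in artifacts:
        ident = art.get(identifier)
        if ident is None:
            continue  # sketch only: artifacts without an identifier are left unlinked
        # Same identifier on opposite sides of a boundary yields separate clusters
        key = (art.get(segregator), ident)
        clusters[key].append(art["path"])
    return dict(clusters)


# Made-up example data; field names are hypothetical
artifacts = [
    {"path": "s3/reco-model.pkl", "business_unit": "retail", "project": "reco"},
    {"path": "github/reco-config.yaml", "business_unit": "retail", "project": "reco"},
    {"path": "azure/reco-endpoint", "business_unit": "banking", "project": "reco"},
    {"path": "gitlab/scratch.py", "business_unit": "retail"},
]

clusters = cluster_artifacts(artifacts, segregator="business_unit", identifier="project")
for key, paths in clusters.items():
    print(key, paths)
```

Here the two retail artifacts link into one asset through their shared `project` value, while the banking artifact with the same identifier stays in its own cluster because it sits across a segregator boundary.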

How Holistic AI Shadow AI Discovery Works End-to-End

From first scan to governed inventory in just five steps, continuously updated.

All artifacts, documentation, and audit history carry through from discovery to governance. Nothing gets lost in the handoff.

Why it matters

Different teams feel this problem differently.

Bottom line

Shadow AI Discovery already solves the visibility problem: finding every AI system across your infrastructure and surfacing it as a single, unified asset. This release solves what comes next: knowing which assets are highest risk, working through them in a dedicated queue, and bringing them into full governance in one click.

From fragmented data scattered across your infrastructure to a governed, audit-ready inventory. That's the full loop, now closed.