AI That Governs AI: Guardian Agents and the Future of Agentic Governance

As enterprise AI systems evolve from hand-coded applications and static AI models to autonomous agents, governance is also entering a new phase. Traditional AI governance approaches, enforced through policies, documentation, and periodic reviews, were designed for systems that behaved predictably and evolved slowly. That assumption no longer holds.

Agentic AI introduces a fundamentally different operating model because agents can plan, reason, take actions, call external tools, and coordinate across systems in real time. Clearly, governance must adapt, too.

The result is a shift toward a new architectural approach: AI designed to govern other AI. Systems built on this approach are called Guardian Agents.

Guardian Agents: The Missing Layer in AI Architecture

Guardian Agents represent a new class of AI governance that provides continuous oversight of autonomous agents and workflows.

They are not assistants.

They are not task agents.

They are supervisory systems.

While task-based agents focus on productivity outcomes, Guardian Agents focus on visibility, alignment, safety, and control. They operate alongside AI systems and introduce a dedicated governance layer that can:

- Observe behavior across complex workflows

- Evaluate actions against defined intent and policy

- Intervene in real time when risk thresholds are crossed

This shifts governance from something that happens outside AI systems, in documents and periodic reviews, to something that happens within them at runtime.

Why Traditional Governance Architectures Break Down

Most enterprise AI governance frameworks today are built around three control points:

- Static policy definition

- Pre-deployment risk assessment and validation

- Periodic post-hoc monitoring

These mechanisms assume predictable behavior, predefined workflows, and human-in-the-loop intervention.

Agentic systems break that model. They introduce autonomous runtime processes that:

- Generate new execution paths

- Interact with new tools and data

- Coordinate and take action across distributed systems

This makes governance a real-time systems problem rather than a periodic checkpoint.

Core Capabilities of Guardian Agents

To govern agentic AI systems at runtime, Guardian Agents deliver three tightly integrated capabilities:

1. System-Wide Visibility

Guardian Agents maintain real-time visibility into:

- Which agents exist across the environment

- How they interact with humans, tools, data, and other agents

- The sequence of decisions and actions taken

This is not simple logging. It is execution-level observability, often represented as dynamic graphs and workflow traces of agent behavior. This allows organizations to answer a critical question: What are our AI agents actually doing right now?
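As a rough illustration of what "dynamic graphs and workflow traces" could look like in code, the sketch below records observed steps and builds a directed interaction graph from them. The class names, event fields, and agent names are all hypothetical and are not taken from any Holistic AI product.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceEvent:
    """One observed step: `actor` did `action` to `target` (all names hypothetical)."""
    step: int
    actor: str
    action: str
    target: str


class ExecutionGraph:
    """Directed interaction graph built incrementally from trace events."""

    def __init__(self) -> None:
        self.edges: dict[str, set[str]] = defaultdict(set)
        self.trace: list[TraceEvent] = []

    def record(self, event: TraceEvent) -> None:
        # Keep the ordered trace and add an actor -> target edge.
        self.trace.append(event)
        self.edges[event.actor].add(event.target)

    def agents(self) -> set[str]:
        """Every actor that has been seen taking an action."""
        return set(self.edges)

    def reachable_from(self, actor: str) -> set[str]:
        """Everything an actor has touched, directly or through other agents."""
        seen: set[str] = set()
        stack = [actor]
        while stack:
            node = stack.pop()
            for nxt in self.edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen


graph = ExecutionGraph()
graph.record(TraceEvent(1, "planner-agent", "delegate", "billing-agent"))
graph.record(TraceEvent(2, "billing-agent", "call_tool", "payments-api"))
graph.record(TraceEvent(3, "billing-agent", "read", "customer-db"))
```

Here `graph.agents()` answers "which agents exist", and `graph.reachable_from("planner-agent")` shows that a request to the planner ultimately reached the payments API and the customer database through a delegated agent.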

2. Continuous Evaluation

Guardian Agents continuously assess behavior as it unfolds. This includes:

- Validating alignment with policies

- Detecting safety risks (bias, hallucination, prompt injection, leakage)

- Monitoring performance degradation and drift

- Applying contextual risk scoring based on the situation

Unlike traditional testing, this evaluation is adaptive, ongoing, and predictive rather than periodic.
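One simple way to picture contextual risk scoring is to start from a base score for each detected signal and scale it by what the agent can currently reach. The sketch below does that; the signal names follow the list above, but the weights, the `Context` fields, and the scoring formula are illustrative assumptions rather than a documented method.

```python
from dataclasses import dataclass

# Hypothetical base risk per detected signal, on a 0..1 scale.
BASE_RISK = {
    "prompt_injection": 0.8,
    "data_leakage": 0.7,
    "hallucination": 0.4,
    "drift": 0.3,
}


@dataclass(frozen=True)
class Context:
    """Situation in which the behavior was observed (fields are assumptions)."""
    touches_sensitive_data: bool
    can_take_external_action: bool


def risk_score(signals: list[str], ctx: Context) -> float:
    """Take the worst signal, scale it up by context, and cap the result at 1.0."""
    if not signals:
        return 0.0
    # Unknown signals get a middle-of-the-road score instead of being ignored.
    base = max(BASE_RISK.get(s, 0.5) for s in signals)
    multiplier = 1.0
    if ctx.touches_sensitive_data:
        multiplier += 0.25
    if ctx.can_take_external_action:
        multiplier += 0.25
    return min(1.0, base * multiplier)


low_stakes = risk_score(["hallucination"], Context(False, False))
high_stakes = risk_score(["hallucination"], Context(True, True))
```

The same signal scores higher in `high_stakes` than in `low_stakes`, which is the point of scoring "based on the situation": a hallucination in a sandbox is less urgent than one in a workflow that can move money or expose data.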

3. Real-Time Enforcement

A defining feature of Guardian Agents is their ability to act in real time. They can:

- Block or filter unsafe outputs

- Interrupt or terminate workflows

- Restrict access to tools or data

- Trigger remediation or escalation

- Enforce policy dynamically across systems

This transforms governance from passive observation into active control.
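Two of the enforcement actions listed above, restricting tool access and filtering unsafe outputs, can be sketched as a wrapper that sits between an agent and its tools. Everything here is a hypothetical illustration: `GuardedToolbox`, the tool names, and the simple substring filter are assumptions, and a production filter would need far more than keyword matching.

```python
from typing import Callable


class ToolAccessDenied(Exception):
    """Raised when a guarded agent calls a tool it is not allowed to use."""


class GuardedToolbox:
    """Wraps tool calls so access can be restricted while the agent is running."""

    def __init__(self, tools: dict[str, Callable[[str], str]], blocked_terms: list[str]) -> None:
        self._tools = dict(tools)
        self._allowed = set(tools)
        self._blocked_terms = [t.lower() for t in blocked_terms]

    def restrict(self, tool_name: str) -> None:
        """Revoke access to one tool without stopping the agent."""
        self._allowed.discard(tool_name)

    def call(self, tool_name: str, argument: str) -> str:
        # Unknown and revoked tools are both denied.
        if tool_name not in self._allowed:
            raise ToolAccessDenied(tool_name)
        output = self._tools[tool_name](argument)
        # Filter unsafe output before it reaches the agent or the user.
        if any(term in output.lower() for term in self._blocked_terms):
            return "[output withheld by guardian]"
        return output


toolbox = GuardedToolbox(
    tools={
        "search": lambda q: f"results for {q}",
        "lookup": lambda q: f"SSN: 000-00-0000 for {q}",  # stand-in for a leaky tool
    },
    blocked_terms=["ssn:"],
)
```

Calling `toolbox.call("lookup", "alice")` returns the withheld-output placeholder, and after `toolbox.restrict("search")` any further `search` call raises `ToolAccessDenied`. The agent's own code does not change in either case.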

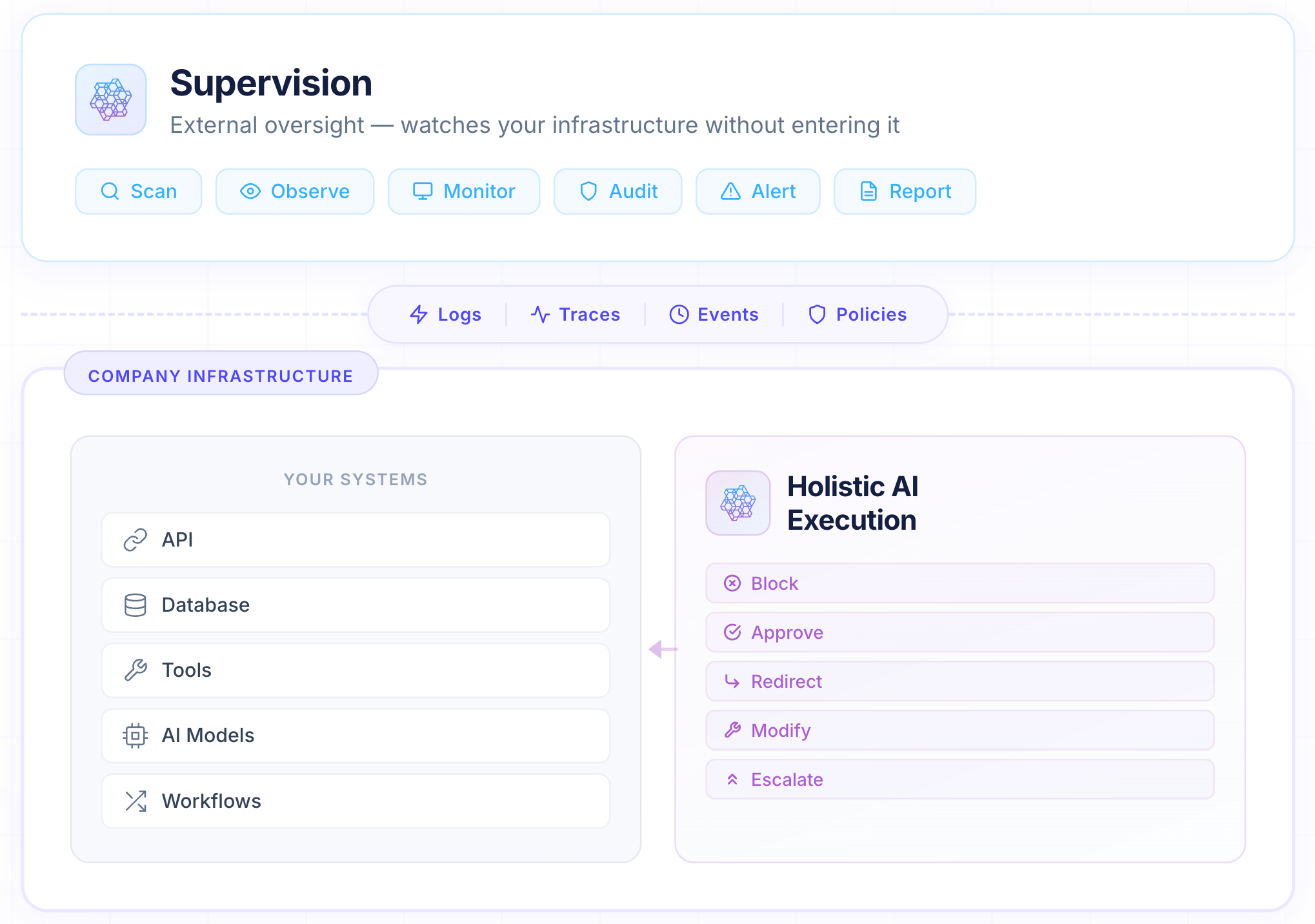

A New Architectural Pattern: Execution + Supervision

Holistic AI Guardian Agents introduce a clear separation of concerns in AI systems:

- Policy Layer: centralized definitions of rules, controls, and thresholds

- Execution Layer: agents that plan, reason, and act

- Supervision Layer: Guardian Agents that continuously monitor and enforce

This pattern creates a governance control plane that sits above and across all AI activity. The benefits are significant:

- Consistent governance across heterogeneous systems

- Centralized visibility in multi-agent environments

- The ability to update governance logic independently of application logic

- Vendor-neutral oversight across models, tools, and clouds

This is particularly important as enterprises move toward multi-agent, multi-vendor ecosystems.
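The three-layer separation can be shown in miniature. In the sketch below, a `Policy` object stands in for the policy layer, a plain function for the execution layer, and a `Supervisor` for the supervision layer. The refund scenario, the names, and the threshold values are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Policy:
    """Policy layer: rules and thresholds, kept separate from agent code."""
    max_refund: float


def refund_agent(request: dict) -> dict:
    """Execution layer: a stand-in agent that proposes an action."""
    return {"action": "refund", "amount": request["amount"]}


class Supervisor:
    """Supervision layer: checks each proposed action against the current policy."""

    def __init__(self, policy: Policy) -> None:
        self.policy = policy

    def run(self, agent: Callable[[dict], dict], request: dict) -> dict:
        proposed = agent(request)
        if proposed["action"] == "refund" and proposed["amount"] > self.policy.max_refund:
            return {"action": "blocked", "reason": "refund exceeds policy limit"}
        return proposed


policy = Policy(max_refund=100.0)
supervisor = Supervisor(policy)
first = supervisor.run(refund_agent, {"amount": 150.0})

# Governance logic changes without touching refund_agent.
policy.max_refund = 200.0
second = supervisor.run(refund_agent, {"amount": 150.0})
```

The same request is blocked in `first` and permitted in `second`, even though `refund_agent` was never edited. That is the practical meaning of updating governance logic independently of application logic.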

From Human Bottlenecks to Autonomous Oversight

Historically, governance has relied heavily on human oversight. But inserting humans into every decision loop does not scale in environments where:

- Agents operate continuously

- Decisions happen in milliseconds

- Workflows span multiple systems simultaneously

Guardian Agents enable a different model:

- Humans define intent, policies, and thresholds

- Guardian Agents monitor and enforce them continuously

- Humans intervene selectively in high-risk scenarios

This is the transition from human-in-the-loop to human-on-the-loop: AI-mediated oversight with human judgment applied where it matters most.
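A minimal sketch of the human-on-the-loop model, assuming a numeric risk score is already available for each decision: low-risk decisions pass, medium-risk ones are handled automatically, and only high-risk ones are queued for a person. The `OversightRouter` name and both threshold values are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class OversightRouter:
    """Routes each decision by risk; thresholds are set by humans (values are hypothetical)."""
    block_threshold: float = 0.5
    escalate_threshold: float = 0.8
    review_queue: deque = field(default_factory=deque)

    def route(self, decision_id: str, risk: float) -> str:
        if risk >= self.escalate_threshold:
            # High risk: hold the decision and wait for human judgment.
            self.review_queue.append(decision_id)
            return "escalated"
        if risk >= self.block_threshold:
            # Medium risk: handled automatically, no human needed.
            return "blocked"
        return "allowed"


router = OversightRouter()
outcomes = [
    router.route("d-1", 0.1),
    router.route("d-2", 0.6),
    router.route("d-3", 0.9),
]
```

Of the three decisions, only `d-3` ends up in `router.review_queue`, so human attention is spent on one case instead of three.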

Design Considerations for Guardian Agent Systems

Building and deploying Guardian Agents introduces new technical considerations:

Cross-System Integration

They must operate across:

- Multiple LLMs and agent frameworks

- Cloud and on-prem environments

- Internal and third-party systems

Latency and Performance

Enforcement must occur in near real time without degrading system performance.

Policy Abstraction

Policies must be both:

- Expressive enough to capture complex intent

- Structured enough to be enforceable programmatically
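One common way to balance expressiveness and enforceability is to write policies as data and evaluate them with a small interpreter. The sketch below assumes a made-up JSON schema (`when`, `then`, `field`, `op`, `value`) and a made-up example policy; it is not a real policy language.

```python
import json
import operator

# Supported comparison operators for this hypothetical schema.
OPERATORS = {
    "eq": operator.eq,
    "gt": operator.gt,
    "in": lambda value, allowed: value in allowed,
    "not_in": lambda value, allowed: value not in allowed,
}

POLICY_JSON = """
{
  "name": "no-external-email-with-pii",
  "when": [
    {"field": "tool", "op": "eq", "value": "send_email"},
    {"field": "recipient_domain", "op": "not_in", "value": ["example.com"]},
    {"field": "contains_pii", "op": "eq", "value": true}
  ],
  "then": "block"
}
"""


def evaluate(policy: dict, action: dict) -> str:
    """Return the policy's outcome if every condition matches, otherwise 'allow'."""
    for cond in policy["when"]:
        if cond["field"] not in action:
            return "allow"  # the policy does not apply to this action
        check = OPERATORS[cond["op"]]
        if not check(action[cond["field"]], cond["value"]):
            return "allow"
    return policy["then"]


policy = json.loads(POLICY_JSON)
external = {"tool": "send_email", "recipient_domain": "other.org", "contains_pii": True}
internal = {"tool": "send_email", "recipient_domain": "example.com", "contains_pii": True}
```

With this policy, `evaluate(policy, external)` yields `"block"` and `evaluate(policy, internal)` yields `"allow"`. Because the policy is plain data, it can be reviewed, versioned, and changed without redeploying the agents it governs.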

Trust and Auditability

Organizations must be able to:

- Understand why a Guardian Agent intervened

- Audit decisions and actions via comprehensive logs

- Ensure reliability of the oversight layer itself
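For auditability, each intervention can be logged with its reason, and the log itself can be made tamper-evident by chaining entries with a hash. The sketch below uses SHA-256 from Python's standard library; the entry fields and the `AuditLog` class are assumptions, and a real log would also carry timestamps and be stored outside the process.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted so the hash is reproducible)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent: str, action: str, decision: str, reason: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "agent": agent,
            "action": action,
            "decision": decision,
            "reason": reason,  # why the Guardian Agent intervened
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("billing-agent", "refund 150.00", "blocked", "refund exceeds policy limit")
log.append("billing-agent", "read customer-db", "allowed", "within data access policy")
```

`log.verify()` returns `True` for an untouched log and `False` if any stored entry is edited afterwards, which gives reviewers a basic check on the reliability of the oversight record itself.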

The Strategic Shift

As AI systems become more autonomous, governance cannot remain static. The trajectory is clear:

- From lifecycle governance → runtime governance

- From manual oversight → automated supervision

- From fragmented controls → unified governance layers

Guardian Agents are emerging as the operational backbone of this shift. They represent a move toward governance that is:

- Continuous

- Embedded

- Adaptive

- Scalable

Closing Thought

Enterprises are not just deploying AI models. They are deploying systems that reason and act. Ensuring those systems remain aligned, safe, and controllable requires more than policies, audits, and reviews. It requires a new class of infrastructure.

Holistic AI Guardian Agents are that infrastructure: AI systems designed to supervise AI, in real time, at scale.

To learn more about Holistic AI’s Guardian Agents, schedule a demo.