Programmable Controls for Continuous AI Governance

You’ve mapped your AI risks. You’ve built assurance workflows. You’ve run bias audits and red teaming exercises across your portfolio.

But today, all of that still depends on someone remembering to press the button.

That’s where things break down.

- New AI assets slip through without review: A team registers a new application on Tuesday. It sits in the platform, unassessed, until someone notices three weeks later, usually after it's already in production. Assurance runs are manual, inconsistent, and late.

- Your workflow says every client-facing model gets a bias audit quarterly: In practice, it happens when someone has bandwidth. Some assets get tested twice. Others haven't been touched in six months. You can't prove continuous compliance.

- Regulators don't want to hear that you ran a risk assessment once: They want evidence that governance is ongoing, that every asset in scope is being monitored, tested, and reassessed on a schedule you can defend.

Your governance framework isn't the problem. The gap is execution: getting the right tasks to run on the right assets at the right time, automatically.

What's New

Programmable Controls are the automation layer for the Holistic AI Governance Platform. They let you define three things: what should happen, where it applies, and when it runs.

The Holistic AI Governance Platform handles the rest. No manual triggers. No missed deadlines. No assets falling through the cracks.

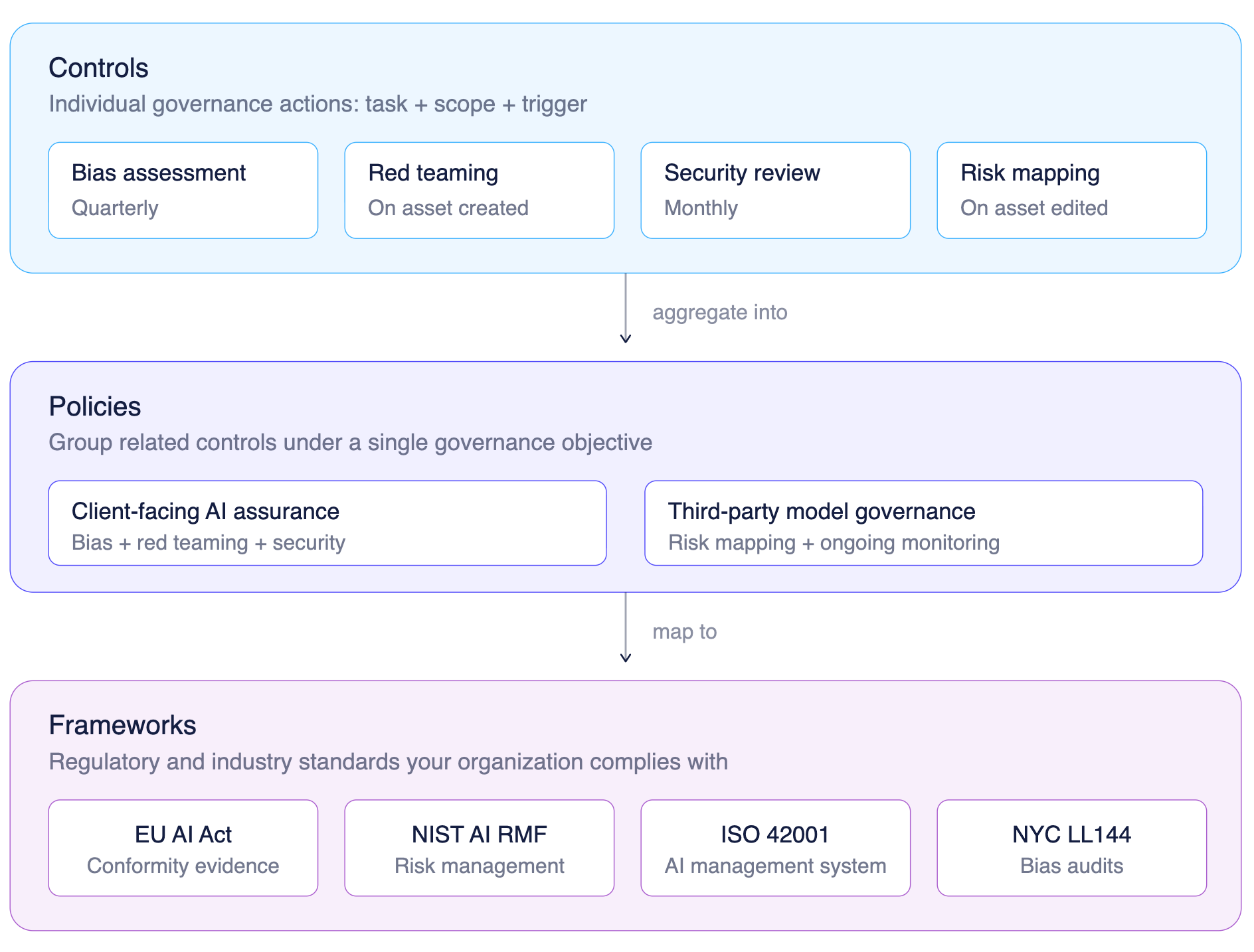

Every control you create connects to the governance hierarchy you've already built. Controls roll up into Policies, which roll up into Frameworks like the EU AI Act, NIST AI RMF, ISO 42001, and NYC LL144. The work you do at the control level automatically feeds the compliance evidence at the framework level.

How Programmable Controls Work

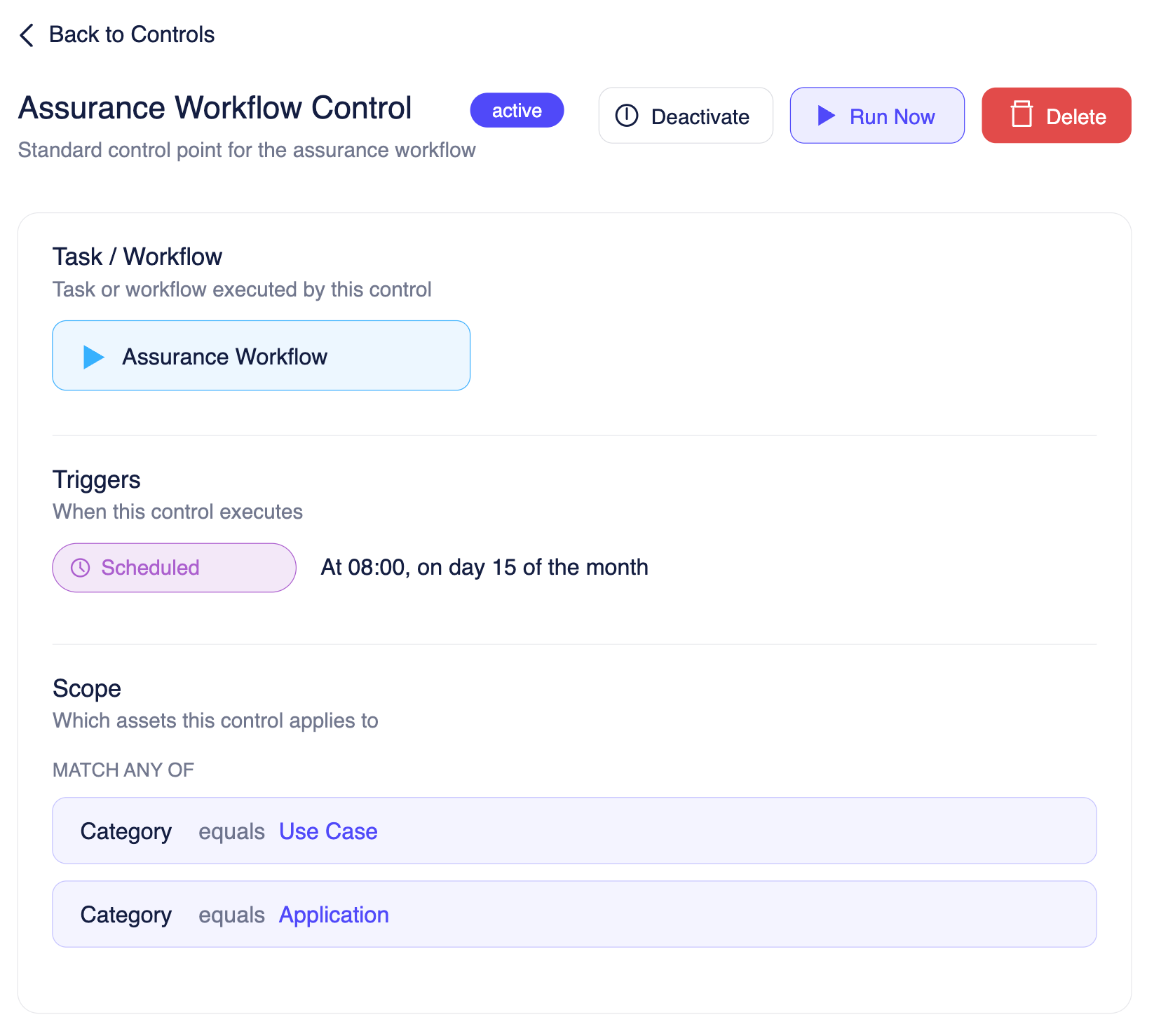

A control has three parts: a task, a scope, and a trigger. You configure each one through a step-by-step wizard, and the platform takes it from there.
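To make the three parts concrete, here is a minimal Python sketch of a control as a data structure. The class and field names are illustrative assumptions, not the platform's actual schema or API; the task name and rule text come from the examples in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Control:
    """Hypothetical model of a control; not the platform's actual schema."""
    name: str
    task: str             # what should happen
    scope: str            # where it applies (plain-language rule)
    triggers: tuple = ()  # when it runs

    def summary(self) -> str:
        when = ", ".join(self.triggers)
        return f"{self.name}: run {self.task} where {self.scope}; triggers: {when}"


control = Control(
    name="Vendor red teaming",
    task="Red Teaming",
    scope="Developed By equals External",
    triggers=("Asset Created", "Every Monday at 10:00"),
)
print(control.summary())
```

In the platform you set these same three fields through the wizard; the sketch only shows how little information a control needs to carry.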

Task & Workflow

Every control is linked to a task or workflow, the actual governance action it will execute. The full library includes 50+ tasks across four categories:

- Workflows: Agentic Assurance, EU AI Act Compliance, NYC Bias Audit, Assurance Workflow, Agent Graph Workflow, Agent Observability

- Assessments: ML Bias, ML Robustness, ML Efficacy, ML Security, ML Transparency, LLM Bias, LLM Privacy, AI Transparency, Exposure Assessment, EU AI Act Classification

- Testing: Red Teaming, Counterfactual Bias Testing, Jailbreak Testing, Hallucination Testing, Tool Misuse Testing, MMLU, Toxicity

- Mitigations & Reporting: Bias Mitigation, Robustness Mitigation, Mitigation Roadmaps, Assurance Reports, Bias Audit Reports, EU AI Act Conformity Readiness

You pick the task once. The control runs it every time it's triggered, with the same configuration, the same methodology, and the same output format.

What this means for you:

- Assurance work that used to require human initiation now runs on autopilot

- Your Assurance Workflow Control executes the same workflow across every asset in scope

- Every run happens on the same schedule, every time

Scope Rules

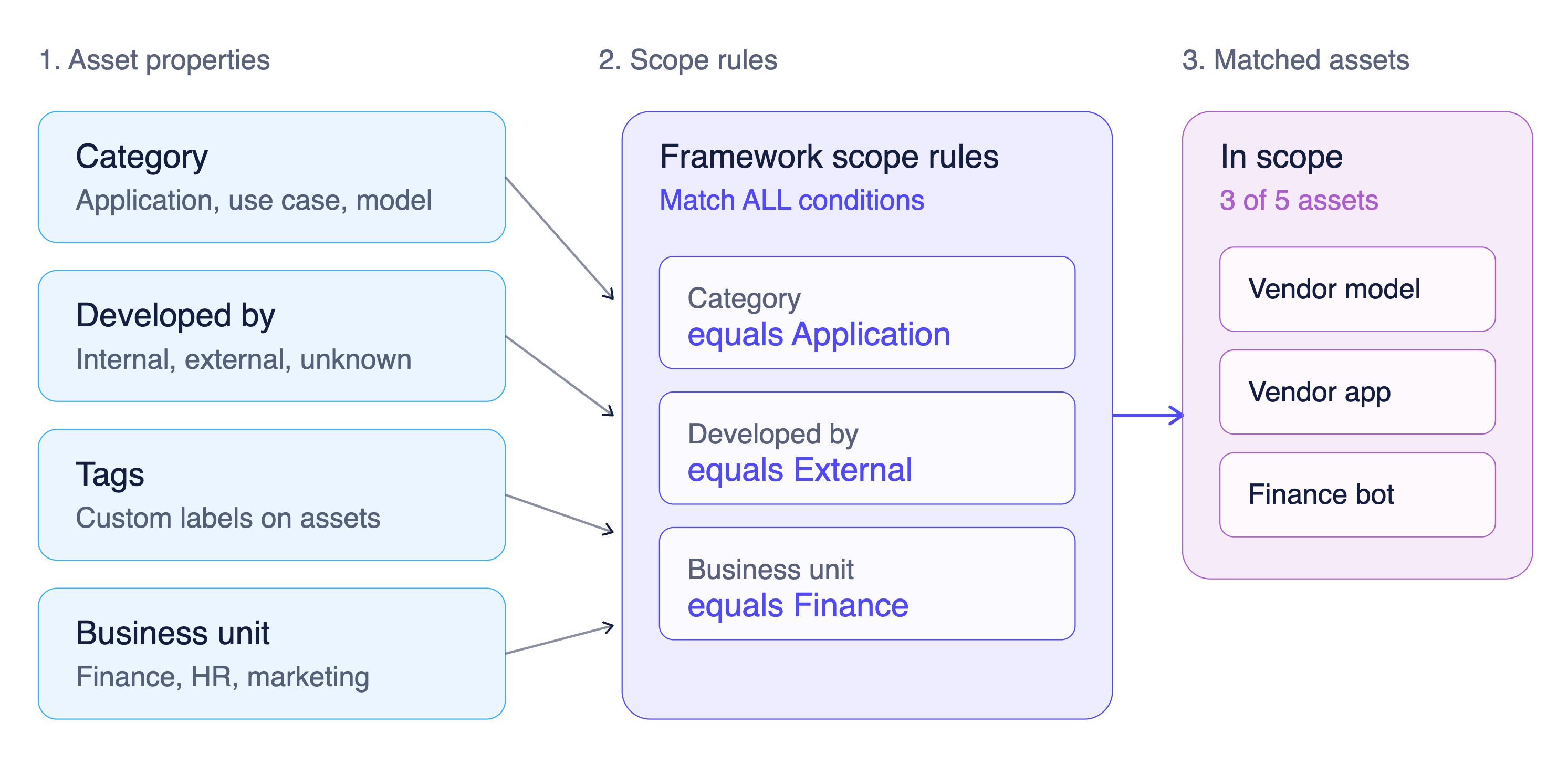

Not every control applies to every asset. Scope rules let you define exactly which assets a control governs, using the same metadata properties you already manage in your AI registry.

You build scope rules with a conditional logic builder. Available properties include:

- Category: Application, Use Case, Model, Platform, Uncategorized

- Developed By: Internal, External, Unknown

- Tags: custom labels you've already applied to your assets

- Business Unit: Finance, HR, Marketing, Operations

You combine conditions with AND and OR. For example:

- Category equals Application AND Developed By equals External runs only on third-party applications.

- Category equals Use Case OR Category equals Application covers both asset types.

- Business Unit equals Finance AND Tags contains client-facing targets a specific risk profile.

The platform previews your scope in plain language before you save: "Include assets where: (Category equals Use Case OR Category equals Application)."
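The logic behind a scope rule can be sketched in a few lines of Python. This is an assumption-laden illustration, not the platform's implementation: `Condition`, `Group`, and the sample assets are hypothetical, and only the `equals` and `contains` operators seen in the examples above are modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    prop: str   # registry property, e.g. "Category"
    op: str     # "equals" or "contains" (the only operators modeled here)
    value: str

    def matches(self, asset: dict) -> bool:
        actual = asset.get(self.prop)
        if self.op == "equals":
            return actual == self.value
        if self.op == "contains":
            return self.value in (actual or [])
        raise ValueError(f"unsupported operator: {self.op}")

    def describe(self) -> str:
        return f"{self.prop} {self.op} {self.value}"


@dataclass(frozen=True)
class Group:
    joiner: str        # "AND" or "OR"
    conditions: tuple  # Condition objects

    def matches(self, asset: dict) -> bool:
        results = (c.matches(asset) for c in self.conditions)
        return all(results) if self.joiner == "AND" else any(results)

    def describe(self) -> str:
        return "(" + f" {self.joiner} ".join(c.describe() for c in self.conditions) + ")"


# Hypothetical registry entries, for illustration only.
assets = [
    {"name": "Loan chatbot", "Category": "Application", "Developed By": "External",
     "Business Unit": "Finance", "Tags": ["client-facing"]},
    {"name": "Churn model", "Category": "Model", "Developed By": "Internal",
     "Business Unit": "Marketing", "Tags": []},
    {"name": "CV screening", "Category": "Use Case", "Developed By": "Internal",
     "Business Unit": "HR", "Tags": []},
]

scope = Group("OR", (Condition("Category", "equals", "Use Case"),
                     Condition("Category", "equals", "Application")))
third_party = Group("AND", (Condition("Category", "equals", "Application"),
                            Condition("Developed By", "equals", "External")))

preview = f"Include assets where: {scope.describe()}"
in_scope = [a["name"] for a in assets if scope.matches(a)]
print(preview)
print(in_scope)
```

The same `describe` output doubles as the plain-language preview, which keeps what you read and what gets evaluated in sync.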

What this means for you:

- When a new asset is registered and matches your scope rules, it's automatically assigned to the right controls

- No manual triage, no forgotten assets

- The moment something enters your portfolio, governance is already attached

Triggers

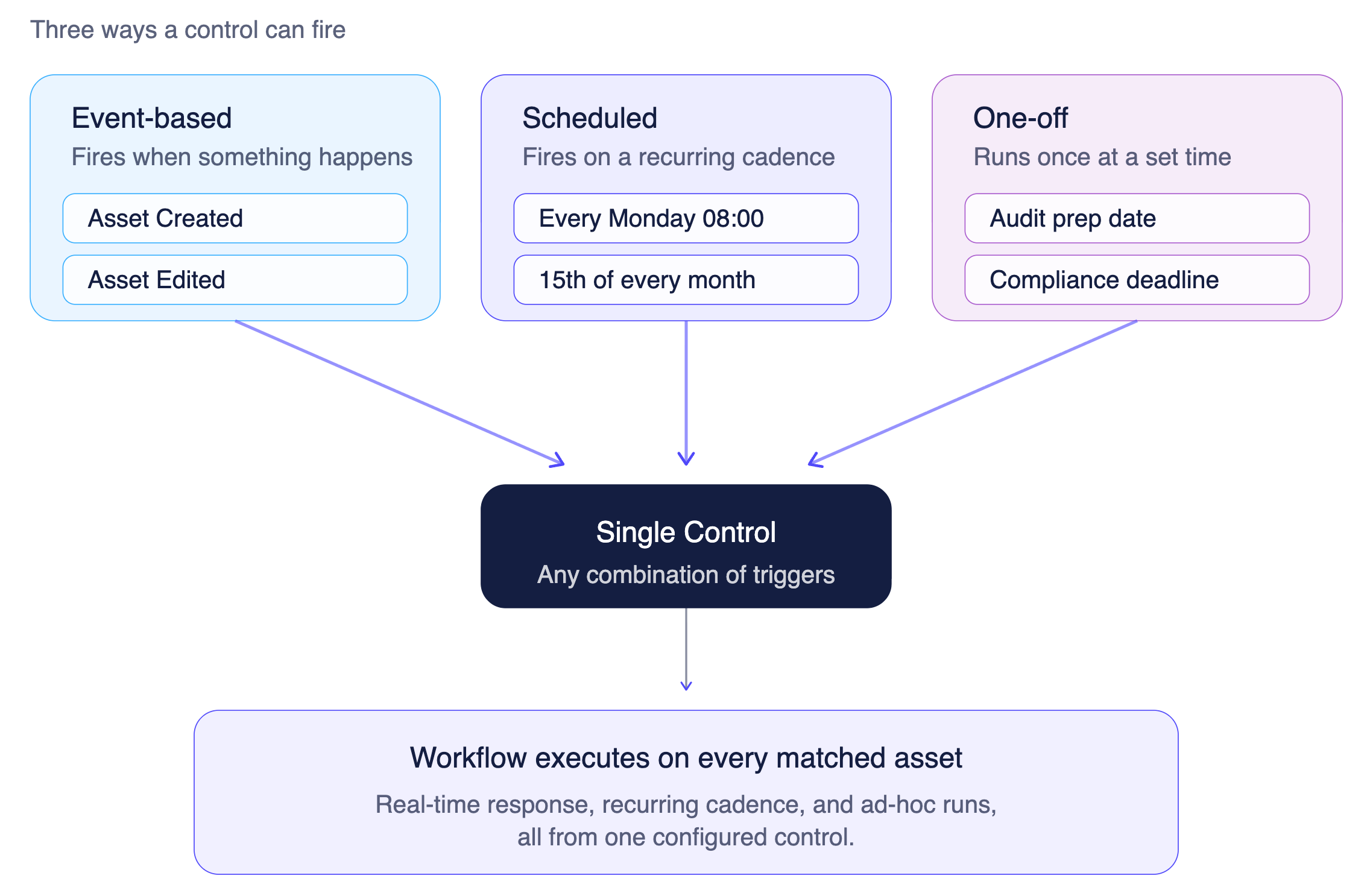

Controls can fire in three ways:

- Event-based: runs automatically when something happens in the platform. Asset Created triggers the control the moment a new asset is registered. Asset Edited re-triggers it on any update.

- Scheduled: runs on a recurring cadence (daily, weekly, monthly, or on a specific day), such as every Monday at 10:00 or the 15th of every month at 08:00.

- One-off: runs once at a specific time. Useful for audit preparation or deadline-driven compliance tasks.

You can combine multiple triggers on a single control. An Assurance Workflow Control might fire on "Asset Created" and "Asset Edited" events plus a weekly Monday morning schedule. Every entry point is covered.
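For the scheduled case, the two cadences mentioned above can be worked out with Python's standard `datetime` module. This is a rough sketch under stated assumptions: `next_weekly` and `next_monthly` are hypothetical helpers, times are naive (no time zones), and the monthly helper assumes a day that exists in every month (1 to 28).

```python
from datetime import datetime, timedelta


def next_weekly(after: datetime, weekday: int, hour: int, minute: int = 0) -> datetime:
    """Next occurrence of weekday (Monday=0) at hour:minute, strictly after `after`."""
    candidate = after.replace(hour=hour, minute=minute, second=0, microsecond=0)
    candidate += timedelta(days=(weekday - after.weekday()) % 7)
    if candidate <= after:
        candidate += timedelta(days=7)
    return candidate


def next_monthly(after: datetime, day: int, hour: int, minute: int = 0) -> datetime:
    """Next occurrence of `day` of the month at hour:minute, strictly after `after`.

    Assumes 1 <= day <= 28 so the date exists in every month.
    """
    candidate = after.replace(day=day, hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= after:
        if after.month == 12:
            candidate = candidate.replace(year=after.year + 1, month=1)
        else:
            candidate = candidate.replace(month=after.month + 1)
    return candidate


now = datetime(2025, 1, 1, 9, 0)  # a Wednesday
print(next_weekly(now, weekday=0, hour=10))  # every Monday at 10:00
print(next_monthly(now, day=15, hour=8))     # the 15th of every month at 08:00
```

A control with several triggers would simply take the earliest of these times, and also run whenever a matching event arrives.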

What this means for you:

- Governance runs on your terms, whether that's reacting to changes in real time or following a fixed schedule

- No one has to remember to start a run

Putting It Together: Scope + Triggers = Conditional Automation

This is where it clicks. When you combine scope rules with triggers, you get conditional governance that responds to what's happening in your portfolio, automatically.

Here's what you can set up in minutes:

Different risk profiles get different governance, and none of it requires someone to manually assign, schedule, or follow up. You build the rules once. The platform enforces them continuously.
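One way to picture the combination is as a small dispatcher: when an event arrives for an asset, every control whose trigger and scope both match gets to run. The Python below is a sketch under assumptions, with a hypothetical `Control` class, hypothetical control names, and scopes written as plain functions; it is not how the platform is implemented.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Control:
    """Hypothetical control: a task, a scope predicate, and event triggers."""
    name: str
    task: str
    in_scope: Callable[[dict], bool]
    events: frozenset


controls = [
    Control(
        name="Baseline assurance",
        task="Assurance Workflow",
        in_scope=lambda a: a["Category"] in {"Application", "Use Case"},
        events=frozenset({"Asset Created", "Asset Edited"}),
    ),
    Control(
        name="Vendor red teaming",
        task="Red Teaming",
        in_scope=lambda a: a["Developed By"] == "External",
        events=frozenset({"Asset Created"}),
    ),
]


def tasks_to_run(event: str, asset: dict) -> list:
    """Return the tasks of every control whose trigger and scope both match."""
    return [c.task for c in controls if event in c.events and c.in_scope(asset)]


vendor_app = {"Category": "Application", "Developed By": "External"}
internal_model = {"Category": "Model", "Developed By": "Internal"}

print(tasks_to_run("Asset Created", vendor_app))
print(tasks_to_run("Asset Edited", vendor_app))
print(tasks_to_run("Asset Created", internal_model))
```

Here the vendor-supplied application picks up both controls on registration, while the internal model matches neither scope and is left alone.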

AI Autofill

You don't have to configure everything by hand. Write a description of what the control should do, and AI Autofill generates the full configuration: the task, the scope rules, and the triggers.

Review it, adjust if needed, and save.

What this means for you:

- Setting up governance automation takes minutes, not hours

- Describe the outcome in plain language, and the platform does the wiring

Why Continuous AI Governance Requires Programmable Controls

AI governance has shifted. It used to be a periodic exercise: run a risk assessment at launch, file the report, revisit it next year. That model doesn't hold anymore, and regulators, boards, and customers have all noticed.

Three things changed:

- AI portfolios grew faster than governance teams. Most enterprises now manage hundreds of models, applications, and use cases across business units. Manual governance can't scale to that volume, and hiring more reviewers isn't the answer.

- Models change after deployment. Fine-tunes, prompt updates, new data sources, swapped vendors. Every change is a new risk surface. A point-in-time assessment from six months ago tells you almost nothing about the system running today.

- Regulators expect ongoing evidence. The EU AI Act, NIST AI RMF, ISO 42001, and NYC LL144 all assume governance is continuous. "We did a risk assessment once" is no longer a defensible answer to an auditor.

Continuous governance is the new baseline. But continuous doesn't mean "more often." It means structurally automated: every asset, every change, every cycle, captured by the system itself.

That's what Programmable Controls do.

- They close the execution gap. Frameworks, policies, and registries describe what should happen. Without an automation layer, none of it actually runs without human effort. Controls turn intent into enforcement.

- They let governance scale with your portfolio, not your headcount. Whether you have 20 assets or 2,000, the same control governs all of them. Your team doesn't have to grow with your AI footprint.

- They produce evidence as a byproduct of operation. Every run is logged, timestamped, and tied back to the policy and framework it supports. Audit prep stops being a project and becomes a query.

- They adapt to risk profile, not just asset count. Scope rules let you apply different governance intensity to different risk tiers, automatically. High-risk and third-party assets get more scrutiny without anyone having to remember to apply it.

A governance platform without programmable controls is a system of record. A governance platform with them is a system of action. For continuous AI governance, only the second one works.

What You See After a Control Runs

Every control tracks two things: the assets it applies to, and what happened when it ran.

Assigned Assets shows every asset matched by your scope rules, including:

- Asset name and category (application, use case, model)

- Compliance status (Reconciled, Pending, Failed)

- Team assignment

- When it was assigned

- When the control last ran against it

Execution History gives you the full audit trail:

- Asset name

- Run status (running, passed, failed)

- Start time and completion time

- Links to the specific control and workflow that produced the run

When an auditor asks "when was this asset last assessed?", you don't dig through spreadsheets. It's right there.
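If you think of execution history as a list of records, the auditor's question reduces to a short lookup. The record fields, asset names, and timestamps below are made up for illustration and are not the platform's export format.

```python
from datetime import datetime

# Hypothetical execution-history records; field names are assumptions.
execution_history = [
    {"asset": "Loan chatbot", "control": "Vendor red teaming", "status": "passed",
     "started": datetime(2025, 3, 3, 10, 0), "completed": datetime(2025, 3, 3, 10, 42)},
    {"asset": "Loan chatbot", "control": "Vendor red teaming", "status": "failed",
     "started": datetime(2025, 3, 10, 10, 0), "completed": datetime(2025, 3, 10, 10, 37)},
    {"asset": "Loan chatbot", "control": "Vendor red teaming", "status": "running",
     "started": datetime(2025, 3, 17, 10, 0), "completed": None},
    {"asset": "CV screening", "control": "Baseline assurance", "status": "passed",
     "started": datetime(2025, 2, 15, 8, 0), "completed": datetime(2025, 2, 15, 8, 20)},
]


def last_assessed(asset: str, history: list):
    """Return the most recently completed run for an asset, or None if there is none."""
    finished = [r for r in history if r["asset"] == asset and r["completed"] is not None]
    return max(finished, key=lambda r: r["completed"], default=None)


latest = last_assessed("Loan chatbot", execution_history)
print(latest["completed"], latest["status"])
```

Runs still in progress are skipped, so the answer always points at a finished assessment with a known result.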

Controls, Policies, and Frameworks

Controls are the most granular layer of governance in the platform, but they don't exist in isolation.

- Controls define the individual governance action: what task to run, which assets it applies to, and when.

- Policies group related controls together. A policy might be "All client-facing applications must undergo bias and security assurance quarterly." That policy contains multiple controls (one for bias assessment, one for red teaming, one for security review), each with its own scope and schedule.

- Frameworks map to the regulatory or industry standard you're complying with, such as EU AI Act, NIST AI RMF, ISO 42001, or NYC Local Law 144. When your controls run, the evidence they generate flows up through policies into framework-level compliance reporting, automatically.

You configure governance at the control level. You report on it at the framework level. The work is granular. The evidence is aggregated. The whole structure is auditable.
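The roll-up can be sketched as two lookups: each run belongs to a control, each control to a policy, and each policy to one or more frameworks. The mappings and run results below are hypothetical examples, not real compliance data or the platform's data model.

```python
from collections import Counter, defaultdict

# Hypothetical hierarchy: control -> policy -> frameworks.
control_to_policy = {
    "Bias assessment": "Client-facing assurance",
    "Red teaming": "Client-facing assurance",
}
policy_to_frameworks = {
    "Client-facing assurance": ["EU AI Act", "NIST AI RMF"],
}

# Hypothetical run results as (control, status) pairs.
runs = [
    ("Bias assessment", "passed"),
    ("Red teaming", "failed"),
    ("Bias assessment", "passed"),
]


def evidence_by_framework(run_results: list) -> dict:
    """Count run outcomes per framework by following control -> policy -> framework."""
    summary = defaultdict(Counter)
    for control, status in run_results:
        policy = control_to_policy[control]
        for framework in policy_to_frameworks[policy]:
            summary[framework][status] += 1
    return {framework: dict(counts) for framework, counts in summary.items()}


report = evidence_by_framework(runs)
print(report)
```

Each run is recorded once at the control level and counted under every framework its policy supports, which is the sense in which the work is granular and the evidence is aggregated.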

What Changes for Your Team

Different functions feel this differently:

- For compliance and GRC teams: Controls execute on schedule and produce framework-ready evidence without manual follow-up. When the EU AI Act requires ongoing conformity assessment, you point to the execution history. Evidence is timestamped, comprehensive, and running whether or not anyone remembers to ask for it.

- For AI governance leads: Event-based controls catch every new asset the moment it's registered. Assurance workflows run continuously, red teaming runs on schedule, and risk mappings happen automatically. Your role shifts from manually driving assessments to overseeing a system that runs itself.

- For security and risk teams: Scope rules filtered by "Developed By equals External" let you apply stricter controls to vendor-supplied models — more frequent red teaming, tighter bias audits, and mandatory security assessments for third-party AI. Different risk profiles get different governance, automatically.

- For engineering and MLOps teams: Stop getting pinged to "run that assessment again." The control fires, the workflow executes, and the results land in the platform. Full visibility into what's been tested and what passed, without context-switching.

Policies that used to describe what should happen now describe what does happen — on schedule, on every asset, with a full audit trail.

Part of the Holistic AI Governance Platform

Controls are the execution layer, powered by everything else in the platform. AI asset discovery finds what needs governing. Risk classification determines the level of scrutiny each asset gets. Controls automate the assurance work. Policies and Frameworks aggregate the evidence. Runtime Monitoring enforces safety and security in real time.

One platform. One governance model. From registration to production.

Ready to put your governance on autopilot? Book a demo →