Runtime Agentic Enforcement: Tool Calling, Access Control, and Cost Control

AI agents aren't just generating text anymore. They're calling tools, accessing systems, and taking actions on your behalf. And for most enterprises, that's created three new risks overnight:

- You don't know what agents are doing in production. They're calling APIs, running commands, and reaching into systems across your infrastructure — but there's no record of which tools they used or why.

- You can't control what they can access or execute. An agent with the wrong permissions can read production credentials, hit an external API it was never supposed to touch, or run a destructive command before anyone notices.

- You can't explain or predict what they're costing you. Five teams are using five different AI SDKs. Finance gets one bill at the end of the month with no way to trace the spend back to the agent, the team, or the session that generated it.

Your existing governance isn't broken. It just wasn't built for this.

What's changed

The Holistic AI Governance Platform already covers a lot of ground: shadow AI discovery, automated red teaming, bias auditing, regulatory workflows, and a real-time safety layer for toxicity, prompt injection, jailbreaks, data leakage, and hallucinations — all through our Guardian Agents and the HAI Guardian SDK.

That layer monitors what an agent says. The new risk is what an agent does, and Holistic AI can now address that too.

What changes with this release

With Runtime Agentic Monitoring, we're moving governance from observation to enforcement — inline and in real time. Three new controls, built on top of the same Guardian Agents framework you're already using. Same SDK, same incident log, same audit trail. Nothing about your current setup changes, but what Holistic AI can do for you just got much more powerful.

New: Runtime Enforcement in the Holistic AI Agentic Governance Platform

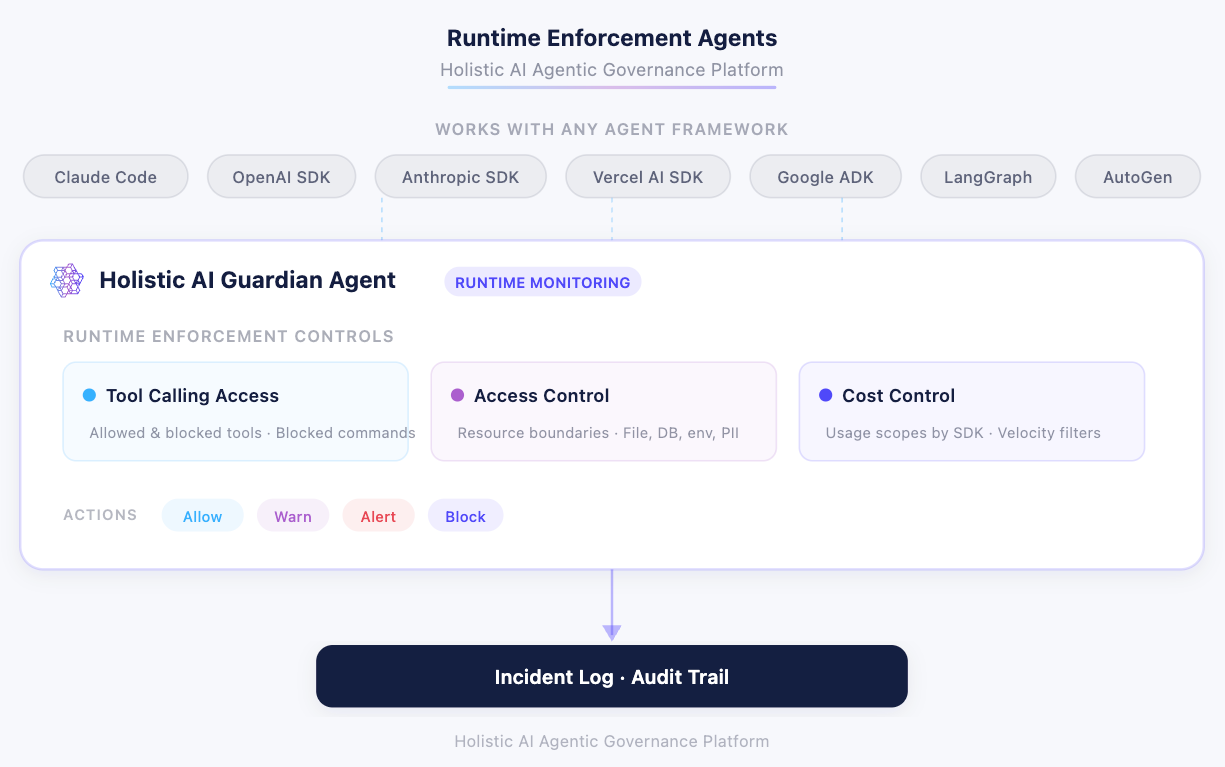

Runtime Enforcement adds a new class of agents that sit inline with whatever AI SDK your team is actually using (Claude Code, OpenAI, Anthropic, Google ADK, Vercel, LangGraph, AutoGen, or anything custom) and enforce rules in real time, while the agent is running. Three controls ship at launch, all built on the same threshold model and incident log you already have.

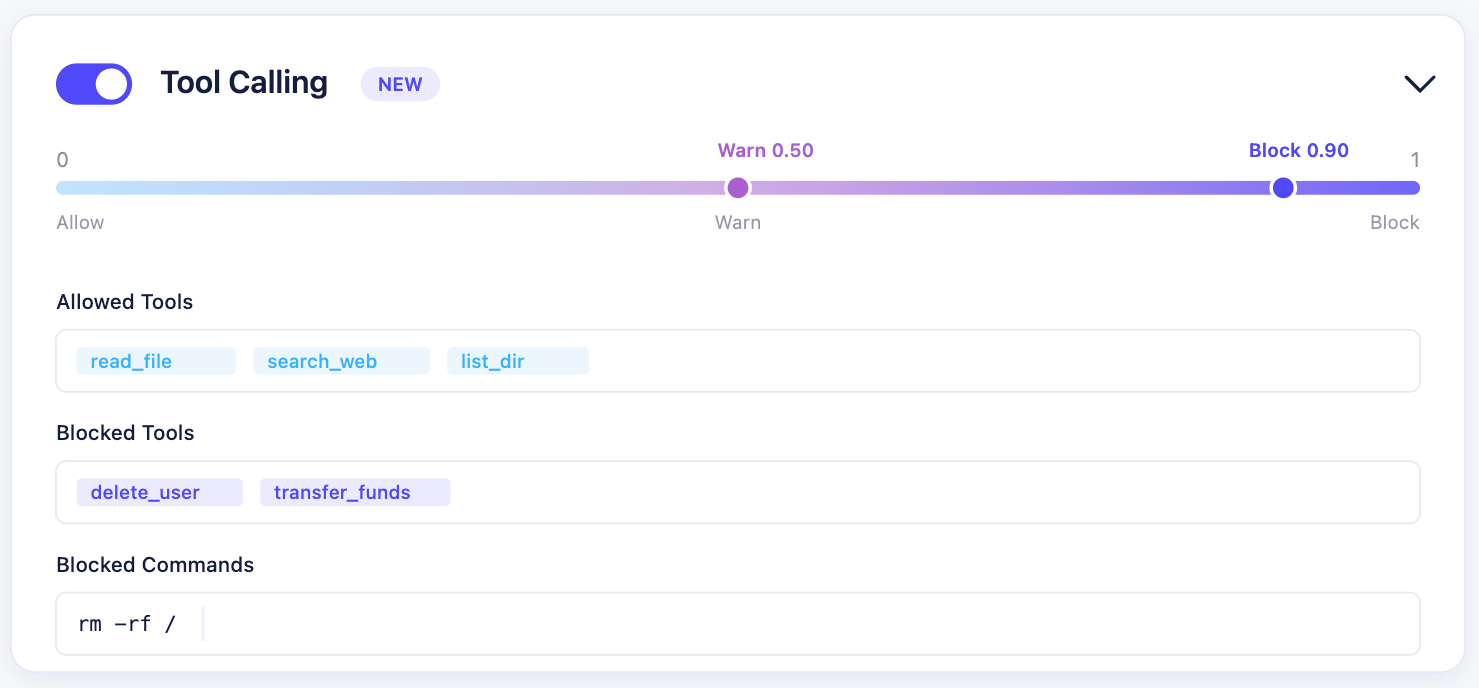

Tool Calling Access

Agents can call APIs, run commands, and trigger workflows across your stack, creating an environment where you have little visibility or control over what they're doing.

Now, with Tool Calling Access, you can explicitly define:

- Allowed Tools: an allowlist of tools the agent can use. Anything not on the list gets blocked by default.

- Blocked Tools: specific tools that should never be accessible, regardless of other permissions.

- Blocked Commands: system-level commands that should never run. rm -rf / is the obvious one. If an agent with terminal access tries to execute it, the call never reaches the shell.

Every action is evaluated before it runs. If it's allowed, it proceeds and logs. If it isn't, the action stops and an incident record is written with the tool name, session ID, action outcome, and risk level.
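
To make the evaluation order concrete, here is a minimal sketch of how the three lists could combine into one decision. It is not the HAI Guardian SDK API: `ToolPolicy`, `evaluate_tool_call`, and the tool names are hypothetical, and the command check is a naive substring match that a real enforcement layer would replace with proper command parsing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical policy object; the fields mirror the three lists above."""
    allowed_tools: frozenset = frozenset()
    blocked_tools: frozenset = frozenset()
    blocked_commands: tuple = ()


def evaluate_tool_call(policy, tool, command=None):
    """Return (decision, reason) for one proposed tool call, before it runs."""
    # Explicit blocks win regardless of any other permission.
    if tool in policy.blocked_tools:
        return "block", f"tool '{tool}' is explicitly blocked"
    # Default deny: anything not on the allowlist is blocked.
    if tool not in policy.allowed_tools:
        return "block", f"tool '{tool}' is not on the allowlist"
    # For shell-like tools, collapse whitespace and look for blocked commands.
    # Naive substring match: it over-blocks (e.g. "rm -rf /tmp/x" also matches).
    if command is not None:
        normalized = " ".join(command.split())
        for pattern in policy.blocked_commands:
            if pattern in normalized:
                return "block", f"command matches blocked pattern '{pattern}'"
    return "allow", "permitted by policy"


# Hypothetical example policy.
policy = ToolPolicy(
    allowed_tools=frozenset({"search_docs", "shell"}),
    blocked_tools=frozenset({"delete_user"}),
    blocked_commands=("rm -rf /",),
)

print(evaluate_tool_call(policy, "shell", "rm  -rf   /"))
print(evaluate_tool_call(policy, "send_email"))
```

Checking the blocklist before the allowlist is one way to honor "regardless of other permissions": a tool that appears on both lists is still blocked.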

What this means for you:

- No unauthorized API calls or system actions

- No "we didn't know the agent could do that" moments

- The same permission model you trust for human users is now applied to AI agents

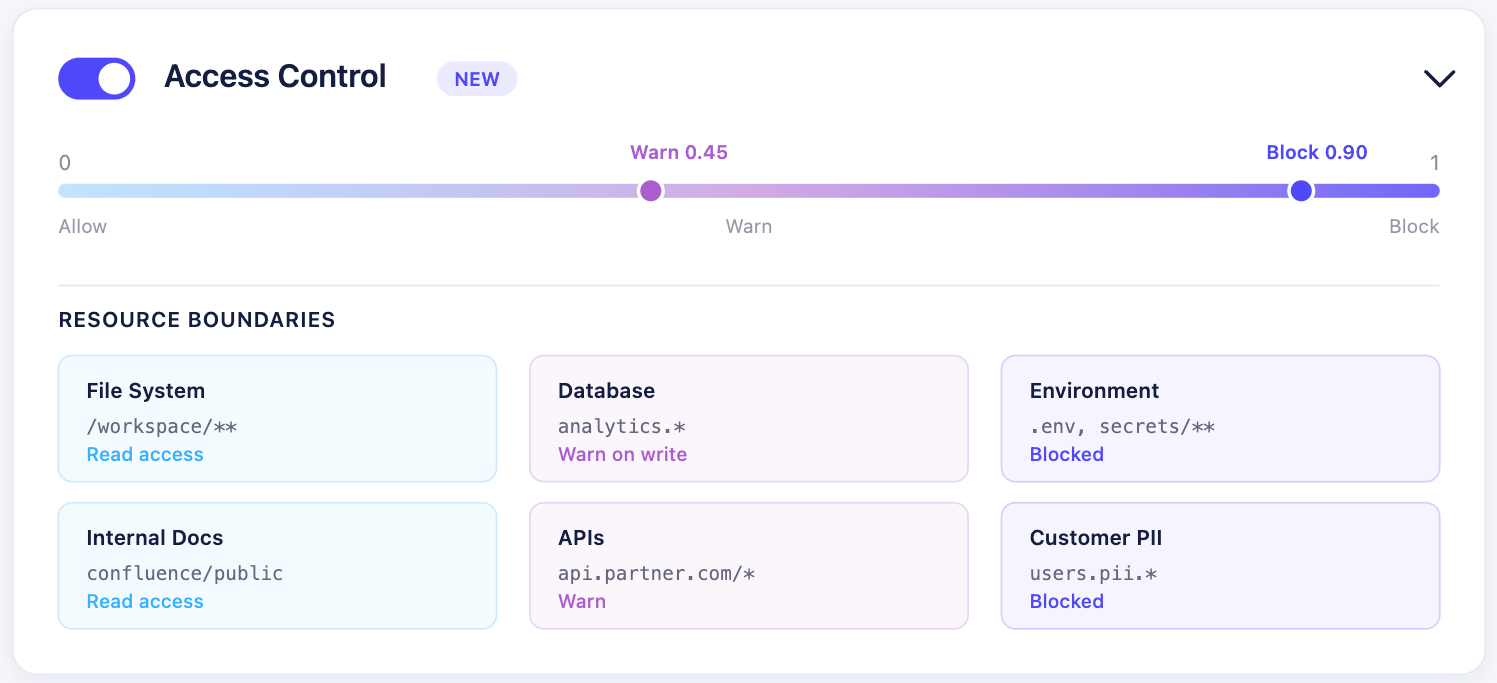

Access Control

Agents read from file systems, pull from databases, and walk through internal documents. Without boundaries, there's nothing stopping an agent from reading a sensitive .env file, entering a restricted directory, or pulling customer PII from a source it was never meant to access.

Access Control lets you draw boundaries around what agents can reach:

- Files and directories: restrict which paths an agent can read or write

- Databases: control which data sources the agent can query

- Environment variables: block access to secrets and credentials

- Customer PII: enforce data access policies at the resource level

You configure it with the same 0-to-1 warn and block thresholds the rest of the platform uses. When an agent tries to cross a boundary, the platform either warns or blocks inline depending on where your thresholds sit.

Combined with Tool Calling Access, you get a two-layer permission model: control what tools the agent can use, and separately control what data those tools can reach.
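
As an illustration of the second layer, the sketch below checks a file path and an environment variable name against boundaries. All names, roots, and patterns are hypothetical, and it returns a plain yes/no rather than the 0-to-1 score the platform applies; it only shows the kind of boundary test involved.

```python
import fnmatch
import posixpath
from pathlib import PurePosixPath

# Hypothetical boundaries for one agent.
ALLOWED_ROOTS = [PurePosixPath("/srv/app/data")]
DENIED_NAME_PATTERNS = [".env", "*.pem", "id_rsa*"]
DENIED_ENV_PATTERNS = ["*_SECRET", "*_TOKEN", "AWS_*"]


def can_read_path(path):
    """True only if the normalized path is under an allowed root and is not a secret file."""
    # Collapse ".." segments first so data/../.env cannot escape the root.
    # This is lexical only; a real check would also resolve symlinks.
    p = PurePosixPath(posixpath.normpath(path))
    if any(fnmatch.fnmatchcase(p.name, pat) for pat in DENIED_NAME_PATTERNS):
        return False
    return any(p == root or root in p.parents for root in ALLOWED_ROOTS)


def can_read_env(name):
    """True if the variable name matches none of the denied patterns."""
    return not any(fnmatch.fnmatchcase(name, pat) for pat in DENIED_ENV_PATTERNS)


print(can_read_path("/srv/app/data/reports/q1.csv"))
print(can_read_path("/srv/app/data/../config.yaml"))
print(can_read_env("GITHUB_TOKEN"))
```

Normalizing before comparing matters: without it, a path that starts inside an allowed directory could walk back out with `..` and still pass a simple prefix check.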

What this means for you:

- Sensitive data stays inside the boundaries you set

- Agents get access to what they need — without exposing everything else

- When an auditor asks "could your AI access that data?", you have the logs to prove it couldn't

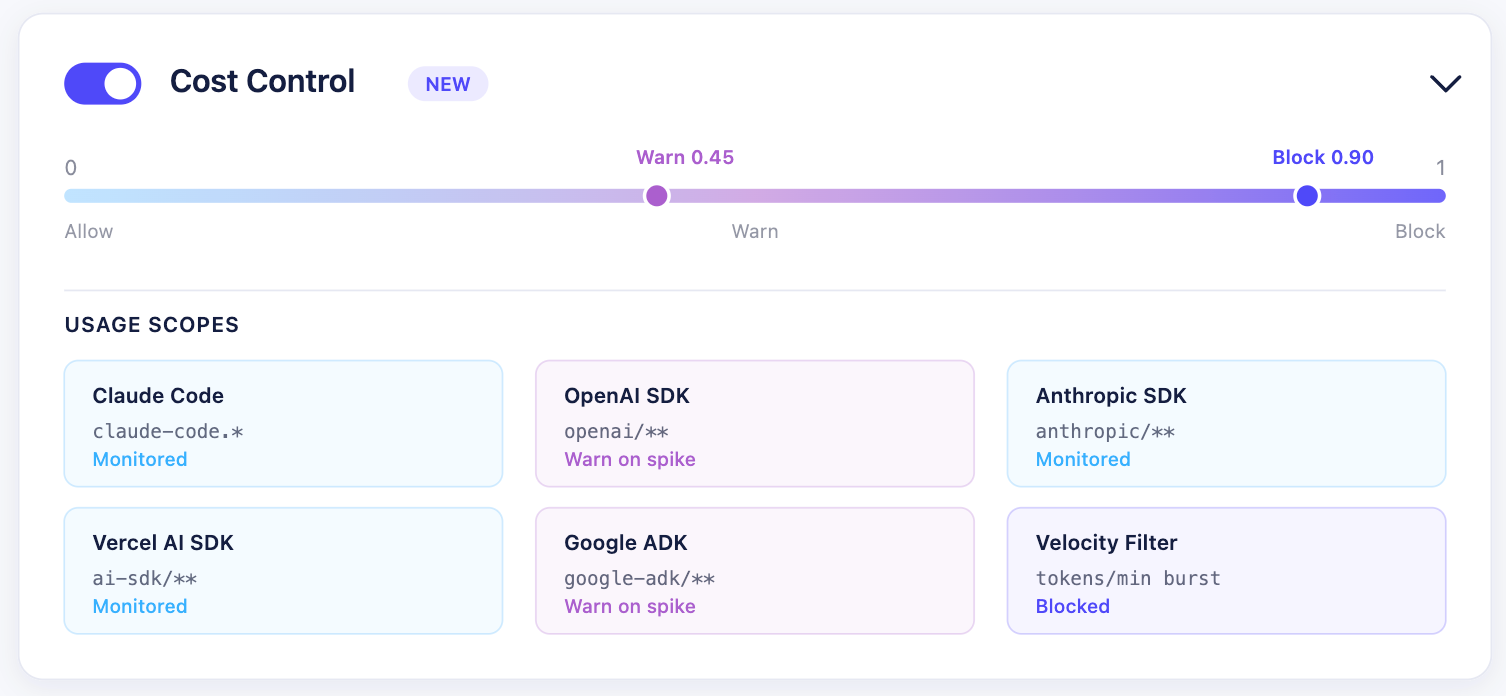

Cost Control

AI spend is fragmented, invisible, and reactive. Finance sees the bill, but not the behavior behind it. One engineer's Claude Code session burns tokens over a weekend. A PM iterates prompts through the OpenAI SDK. Another team has an agent in production on Google ADK. Each of those stacks reports its own usage somewhere — if it reports at all.

Cost Control tracks usage across every agent and SDK in real time, and lets you:

- Attribute spend to sessions, teams, and tools: see exactly which agents are expensive and why, across Claude Code, OpenAI SDK, Anthropic SDK, Vercel AI SDK, Google ADK, LangGraph, AutoGen, or anything custom

- Set per-minute call rate limits: stop runaway loops and API spam before they compound

- Detect abnormal usage patterns: configure filters for unusual activity — when a session starts burning tokens at a rate that could impact other systems sharing the same quota, the monitoring layer cuts that session automatically
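
A per-minute call limit of the kind described above can be sketched as a sliding window per session. This is an assumption about one reasonable design, not a description of how Holistic AI implements it; `PerMinuteLimiter` and its parameters are hypothetical.

```python
from collections import defaultdict, deque


class PerMinuteLimiter:
    """Sliding-window limiter: at most `max_calls` per session in any window."""

    def __init__(self, max_calls, window_seconds=60.0):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._calls = defaultdict(deque)  # session_id -> timestamps of accepted calls

    def allow(self, session_id, now):
        """Record and permit the call if the session is under its limit at time `now`."""
        calls = self._calls[session_id]
        # Drop timestamps that have aged out of the window.
        while calls and now - calls[0] >= self.window_seconds:
            calls.popleft()
        if len(calls) >= self.max_calls:
            return False  # over the limit: the caller would block or cut the session
        calls.append(now)
        return True


limiter = PerMinuteLimiter(max_calls=3)
print([limiter.allow("s1", t) for t in (0, 1, 2, 3)])
```

Timestamps are passed in explicitly to keep the sketch testable; in a live system the caller would typically supply `time.monotonic()`.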

Estimated costs are calculated using the token counts Holistic AI observes against published model pricing. They're estimates, not exact figures, because pricing varies by contract and tier. For exact spend, pair this with invoice data from your model providers. What you get from Holistic AI is the attribution, the visibility, and the enforcement layer.
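
The arithmetic behind such an estimate is simple: observed token counts multiplied by a per-token price, then summed by whatever dimension you want to attribute to. The sketch below uses made-up model names and prices and a hypothetical event shape; real prices come from each provider's published list or your contract.

```python
from collections import defaultdict

# Hypothetical USD prices per 1M tokens, for illustration only.
PRICE_PER_MILLION = {
    "model-a": {"input": 3.00, "output": 15.00},
    "model-b": {"input": 0.50, "output": 1.50},
}


def estimate_cost(model, input_tokens, output_tokens):
    """Estimated USD cost of one call from observed token counts."""
    price = PRICE_PER_MILLION[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


def spend_by(events, key):
    """Sum estimated cost per value of `key` (e.g. "team" or "session")."""
    totals = defaultdict(float)
    for e in events:
        totals[e[key]] += estimate_cost(e["model"], e["input_tokens"], e["output_tokens"])
    return dict(totals)


# Hypothetical usage events as a monitoring layer might observe them.
events = [
    {"team": "platform", "session": "s1", "model": "model-a",
     "input_tokens": 1_000_000, "output_tokens": 200_000},
    {"team": "growth", "session": "s2", "model": "model-b",
     "input_tokens": 2_000_000, "output_tokens": 1_000_000},
    {"team": "platform", "session": "s3", "model": "model-b",
     "input_tokens": 400_000, "output_tokens": 0},
]

print(spend_by(events, "team"))
print(spend_by(events, "session"))
```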

What this means for you:

- No surprise invoices

- Clear accountability across teams

- Confidence to scale AI without losing financial control

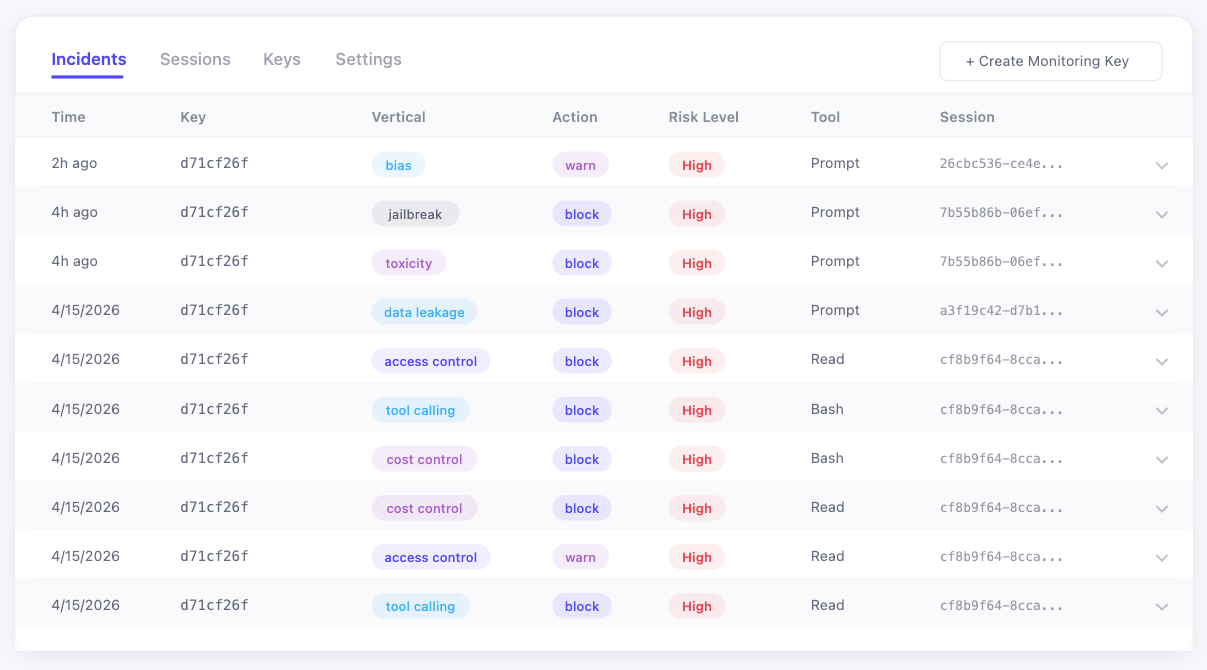

One incident log for everything

All three new controls write to the same Incidents view that was already capturing your content safety events. Nothing is siloed.

Each incident record has:

- Time: when it happened

- Key: which monitoring API key triggered it

- Vertical: which feature flagged it (data leakage, jailbreak, toxicity, access control, tool calling, cost control, etc.)

- Action: warn or block

- Risk Level: high or medium

- Tool: prompt, read, or the specific tool operation

- Session: the unique session ID, linking every action within a single agent run

Drill into any session and you can reconstruct the full sequence of what the agent tried to do, what it got away with, and what Holistic AI stopped.
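
For teams exporting incidents into their own tooling, the record above maps naturally onto a small typed structure. The sketch below is an assumption about shape only: the field names follow the list above, but the actual export format, value spellings, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Incident:
    """One incident record; fields follow the list above (types are assumed)."""
    time: datetime
    key: str         # monitoring API key identifier
    vertical: str    # e.g. "tool calling", "access control", "cost control"
    action: str      # "warn" or "block"
    risk_level: str  # "high" or "medium"
    tool: str        # "prompt", "read", or a specific tool operation
    session: str     # session ID linking actions in one agent run


def session_timeline(incidents, session_id):
    """Return one session's incidents in chronological order."""
    return sorted((i for i in incidents if i.session == session_id),
                  key=lambda i: i.time)


def at(minute):
    """Helper for building sample timestamps."""
    return datetime(2025, 1, 1, 12, minute, tzinfo=timezone.utc)


# Hypothetical sample data.
incidents = [
    Incident(at(5), "key-1", "tool calling", "block", "high", "shell", "sess-a"),
    Incident(at(1), "key-1", "access control", "warn", "medium", "read", "sess-a"),
    Incident(at(3), "key-2", "cost control", "block", "high", "prompt", "sess-b"),
]

for incident in session_timeline(incidents, "sess-a"):
    print(incident.time.isoformat(), incident.vertical, incident.action)
```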

How it works

Everything runs through one SDK, one set of API keys, and one configuration layer. Here's what's under the hood.

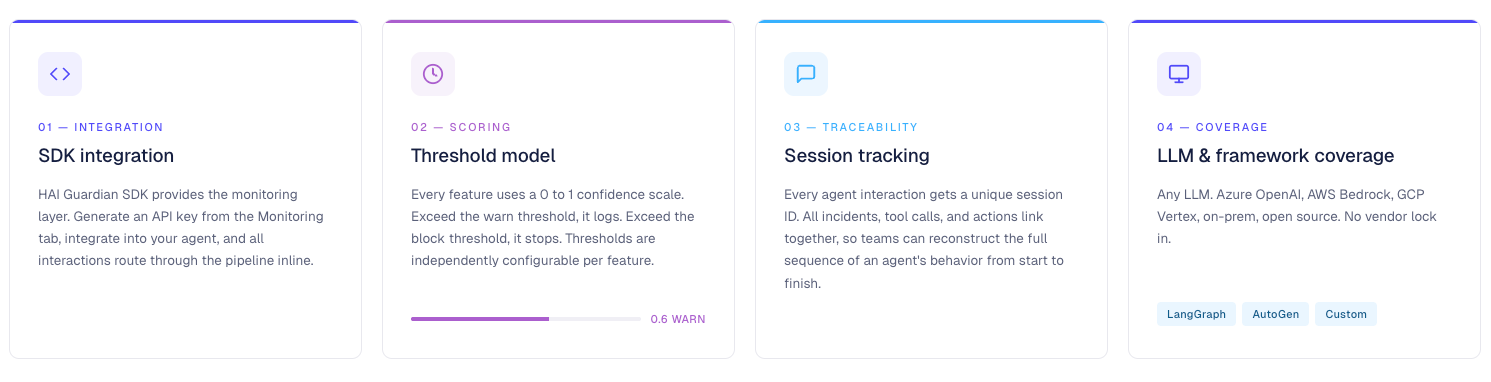

SDK integration

HAI Guardian SDK provides the monitoring layer. Generate an API key from the Monitoring tab, integrate into your agent or AI application, and all interactions route through the pipeline inline. No changes to your underlying model or framework.
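
"Inline" here means the check sits in the call path, so a rejected action never executes. The sketch below shows that general pattern with a plain Python decorator. It is not the HAI Guardian SDK interface: `guarded`, `ToolBlocked`, `demo_check`, and the ticket tools are all hypothetical names used for illustration.

```python
import functools


class ToolBlocked(Exception):
    """Raised instead of running a tool call that the check rejects."""


def guarded(check, log):
    """Wrap a tool function so `check` runs inline, before the tool itself."""
    def decorator(tool_fn):
        @functools.wraps(tool_fn)
        def wrapper(*args, **kwargs):
            decision = check(tool_fn.__name__, args, kwargs)
            # Every decision is logged, whether the call proceeds or not.
            log.append({"tool": tool_fn.__name__, "action": decision})
            if decision == "block":
                raise ToolBlocked(tool_fn.__name__)
            return tool_fn(*args, **kwargs)
        return wrapper
    return decorator


audit_log = []


def demo_check(tool_name, args, kwargs):
    # Hypothetical rule: block any tool whose name starts with "delete_".
    return "block" if tool_name.startswith("delete_") else "allow"


@guarded(demo_check, audit_log)
def read_ticket(ticket_id):
    return f"ticket {ticket_id}"


@guarded(demo_check, audit_log)
def delete_ticket(ticket_id):
    return f"deleted {ticket_id}"


print(read_ticket(7))
try:
    delete_ticket(7)
except ToolBlocked as exc:
    print("blocked:", exc)
```

Because the wrapper raises before calling the underlying function, the blocked tool body never runs, which is the property that separates enforcement from after-the-fact monitoring.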

Threshold model

Every feature uses a 0-to-1 confidence scale. The platform scores each action against the relevant monitoring model. Exceed the warn threshold, it logs. Exceed the block threshold, it stops. Thresholds are independently configurable per feature — set them wherever makes sense for your risk tolerance.
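
The mapping from score to outcome can be sketched in a few lines. The feature names and threshold values below are hypothetical, and "exceed" is read as strictly greater than; the scoring model itself is out of scope.

```python
# Hypothetical per-feature thresholds on the 0-to-1 scale.
THRESHOLDS = {
    "tool_calling": {"warn": 0.5, "block": 0.8},
    "access_control": {"warn": 0.3, "block": 0.6},
}


def decide(score, warn, block):
    """Map a 0-to-1 score to "allow", "warn", or "block"."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score > block:
        return "block"  # the action stops
    if score > warn:
        return "warn"   # the action proceeds and is logged
    return "allow"


def decide_for(feature, score):
    """Apply the thresholds configured for one feature."""
    return decide(score, **THRESHOLDS[feature])


print(decide_for("tool_calling", 0.7))
print(decide_for("access_control", 0.7))
```

The same score of 0.7 produces a warning under one feature and a block under the other, which is what independent per-feature thresholds buy you.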

Session tracking

Every agent interaction gets a unique session ID. All incidents, tool calls, and actions within that session link together, so governance and security teams can reconstruct the full sequence of an agent's behavior from start to finish.

LLM coverage

Any LLM. Azure OpenAI, AWS Bedrock, GCP Vertex, on-prem, open source. No vendor lock-in.

Framework coverage

Native support for LangGraph, AutoGen, and custom agent frameworks. Works with Claude Code, OpenAI SDK, Anthropic SDK, Vercel AI SDK, Google ADK, and anything you've built in-house.

Custom Controls

For teams that want to define their own runtime monitoring logic on top of what's built in, we've shipped a Custom Control option. Available for configuration today.

The Holistic AI Runtime Enforcement Agents

Every row below is a dedicated enforcement agent running inside the Monitoring SDK. After this release, a single Holistic AI Guardian integration gives you the full set:

All of them share the same threshold config, the same incident log, the same audit trail, the same SDK.

Why it matters

Different teams feel this problem differently. Here's what changes for each of them starting now.

For security leaders

You can enforce boundaries on autonomous agents the same way you do for human users — before damage happens. No unauthorized tool calls, no unchecked data access, no destructive commands slipping through. Every action evaluated before it runs.

For governance teams

You can move from periodic testing to continuous oversight, closing the gap between deployment and reality. You stop hoping your agents are behaving and start knowing whether they are, in real time, with a full audit trail.

For finance and ops

You can finally understand and control where AI spend is coming from, before it becomes a problem. No surprise invoices, no runaway sessions eating shared quota, and no more trying to explain a token bill that nobody can trace.

Bottom line

This shifts governance from "here's what the agent said" to "here's what the agent tried to do, and here's what we allowed or stopped." That's the difference between monitoring and enforcement. Complete confidence.

Part of the Holistic AI Governance Platform

One integration. One control layer. Everything — content safety, tool usage, data access, and cost — is enforced through the same SDK, logged in the same incident view, and governed with the same policy model.

Runtime Enforcement plugs directly into the broader Holistic AI Governance Platform: AI asset discovery, risk classification, regulatory workflows (EU AI Act, NIST AI RMF, ISO 42001, NYC LL144), bias auditing, and audit-ready evidence generation — all in one place.

Want to see Runtime Agentic Monitoring in action? Get a demo now.